Weakly Supervised Cyberbullying Detection in Social Media

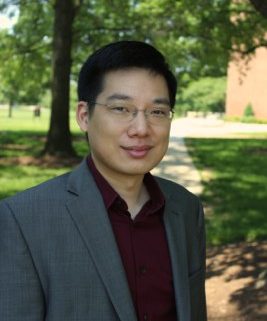

The following Great Innovative Idea is from Bert Huang, Assistant Professor of Computer Science at Virginia Tech. Huang presented his poster, Weakly Supervised Cyberbullying Detection in Social Media, at the CCC Symposium on Computing Research, May 9-10, 2016.

The Idea

One of the research topics I’m most passionate about is developing machine learning algorithms that detect cyberbullying in social media. Cyberbullying is a serious public health threat that is damaging the online experience. While Internet technology is rapidly amplifying our ability to communicate, it’s important to develop complementary technology that helps mitigate the harm of abusive communication.

Computer programs that detect online harassment could enable automatic interventions, such as providing advice to those involved, but we don’t yet have machine learning algorithms that can handle the scale, the structure, and the rapidly changing nature of cyberbullying. Standard supervised classification approaches are hindered by the cost of labeling examples of bullying and by the need for social context to distinguish bullying from less harmful behavior.

My group is developing machine learning algorithms that use weak supervision: instead of being told whether each interaction is bullying, the algorithm receives general indicators of bullying, such as offensive language. The algorithms then extrapolate from these indicators using social media data, considering who sends and receives messages containing them as well as the overall structure of the relationships in the data. The result is collective, data-driven discovery of who is bullying, who is being bullied, and what additional vocabulary is indicative of bullying.
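To make the idea of weak supervision more concrete, here is a minimal sketch of one way such a model could be set up. It is an illustrative assumption, not the group's published algorithm: each user gets a hypothetical bully score and victim score, each word gets a score, a small seed list of offensive words stands in for the "general indicators" and is fixed at 1.0, and stochastic gradient descent nudges all remaining scores so that each message's sender-plus-receiver score agrees with the average score of its words. The function name `fit_consistency_scores`, its parameters, the squared-error-plus-L2 objective, and the toy data are all assumptions chosen for illustration.

```python
from collections import Counter, defaultdict


def fit_consistency_scores(messages, seed_words, lam=0.1, lr=0.1, epochs=200):
    """Hypothetical weakly supervised scorer (illustrative, not the published method).

    messages:   list of (sender, receiver, tokens) tuples; no per-message labels.
    seed_words: weak supervision, e.g. a list of offensive words; each seed
                word's score is fixed at 1.0 and never updated.
    Returns (bully, victim, word) score dictionaries.
    """
    seeds = set(seed_words)
    bully = defaultdict(float)   # tendency of a user to send bullying messages
    victim = defaultdict(float)  # tendency of a user to receive them
    word = defaultdict(float)    # how indicative a word is of bullying
    for w in seeds:
        word[w] = 1.0

    for _ in range(epochs):
        for sender, receiver, tokens in messages:
            if not tokens:
                continue
            n = len(tokens)
            # Vocabulary view of the message: mean score of its words.
            vocab_score = sum(word[t] for t in tokens) / n
            # Participant view minus vocabulary view: the consistency error.
            err = bully[sender] + victim[receiver] - vocab_score
            # One SGD step on 0.5 * err**2 plus an L2 penalty on each variable touched.
            bully[sender] -= lr * (err + lam * bully[sender])
            victim[receiver] -= lr * (err + lam * victim[receiver])
            for t, count in Counter(tokens).items():
                if t not in seeds:  # seed scores stay fixed
                    word[t] -= lr * (-err * count / n + lam * word[t])
    return dict(bully), dict(victim), dict(word)


if __name__ == "__main__":
    # Toy data: one conversation containing a seed word, one benign conversation.
    msgs = [
        ("alice", "bob", ["you", "loser"]),
        ("alice", "bob", ["you", "freak"]),
        ("carol", "dave", ["see", "you", "at", "lunch"]),
        ("carol", "dave", ["thanks", "for", "lunch"]),
    ]
    bully, victim, word = fit_consistency_scores(msgs, seed_words=["loser"])
    print(sorted(bully.items(), key=lambda kv: -kv[1]))
    print(sorted(word.items(), key=lambda kv: -kv[1])[:3])
```

The design point the sketch tries to capture is that no message is ever labeled: the only supervision is the fixed seed scores, and because user and word scores are shared across messages, that signal spreads collectively. In the toy data, the unlabeled word "freak" should pick up a positive score because it appears in a conversation whose participants are implicated by the seed word, while the benign conversation stays near zero.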

Impact

Algorithms for cyberbullying detection can become a critical component of a holistic system to mitigate the harm of bullying. Such a system could include mechanisms such as preventative filters, automated advice, and matchmaking for counseling. Various forms of bullying are linked to mental health issues, such as depression and suicide, and cyberbullying may be even more dangerous: it can happen anytime and anywhere, it can be persistent, and it can be very public. The toxic online social experience this can create is a serious health threat to Internet users, especially younger ones.

Phenomena like cyberbullying are different in nature and structure from the things we’re used to modeling with machine learning, so there are important research problems to solve. As we solve them, we progress toward a world where computing can help improve people’s lives in a deeply social and personal manner.

Other Research

I direct the Machine Learning Laboratory in the Department of Computer Science at Virginia Tech. Our lab’s research focuses on machine learning for complex phenomena. Our research is driven by the fact that modern data comes from complex systems, such as social networks, where observations and variables are tightly dependent. We investigate theoretically justified algorithms for modeling and learning from such data. The underlying models we often work with include probabilistic graphical models and multi-relational graphs. New algorithms for working with these models enable large-scale applications in social network analysis, computational biology, and the Internet of Things—all examples of computing in complex environments.

Researcher’s Background

Since 2015, I’ve been an assistant professor at Virginia Tech. Prior to that, I was a postdoctoral research associate at the University of Maryland. I earned my PhD from Columbia University in 2011 and my undergraduate degree from Brandeis University.

Links

Check out my website at http://berthuang.com, and follow me on Twitter @berty38.